The search landscape in 2026 is distributed across layers. Google’s AI Overviews now intercept a significant proportion of informational and commercial queries before the user reaches a traditional result. ChatGPT, Perplexity, and Gemini are being used to research purchases, shortlist vendors, and form opinions that once belonged entirely to organic search. And the communities feeding those AI systems (Reddit, Quora, LinkedIn, and sector-specific forums) are influencing AI-generated answers in ways that never appear in a crawl report.

A brand can rank on page one and still be absent from the AI Overview directly above it. It can be technically sound and still be invisible to a buyer who asked an AI assistant which supplier to consider. An audit that does not measure this reality is measuring the wrong things.

On an engagement with Bensons for Beds through a London agency, the audit process followed what was, at the time, considered thorough practice: technical health, on-page signals, backlink profile, competitor gap analysis. The deliverable was comprehensive by the standards of the day. What it did not contain was a prioritised roadmap that the marketing team could translate into decisions. The findings were accurate. The direction was absent. That pattern did not begin or end with that engagement. It is the default state of the industry.

The Terminology Shift That Changes Everything

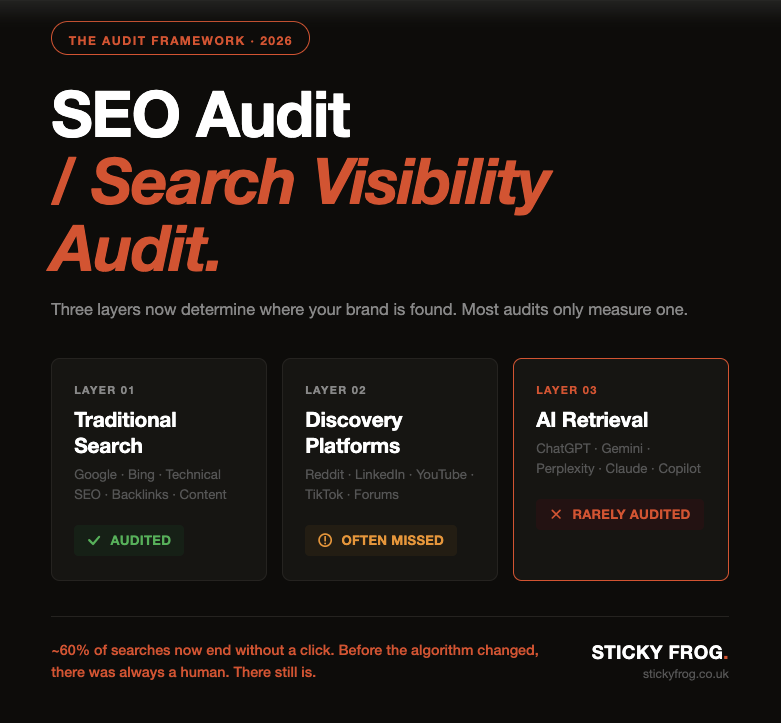

The phrase “search visibility audit” is replacing “SEO audit” in how the most forward-thinking practitioners frame their work, and the reason is practical, not philosophical. A search visibility audit is designed to measure presence across the full ecosystem where buyers encounter a brand. An SEO audit was designed to measure presence in one channel.

The Search Visibility Framework at Sticky Frog is structured around this distinction because it has become impossible to give a brand an accurate account of its visibility without accounting for all three layers: traditional search health, AI search presence, and discovery platform signals. Audits that cover only the first layer are not wrong. They are incomplete, and incomplete is a different kind of problem.

This matters because the consequences of that incompleteness are invisible in the data most marketing teams look at every day. Google Search Console does not tell you that your brand is absent from AI Overviews. GA4 does not show you that a competitor is being cited by ChatGPT in answer to the question your buyer just asked. The gap exists. It compounds. It does not show up until the pipeline starts to thin.

A search visibility audit makes that gap visible. That is its job.

Why an Audit Looks Different Depending on the Business

One of the persistent failures in how audits are structured is that they treat every business the same. A standard crawl report, a list of technical issues, a keyword gap analysis. The format rarely changes regardless of the sector, the buyer journey, or the channels that actually drive decisions for that specific audience.

The reality is that where a business is found, and therefore what a useful audit needs to measure, varies significantly by type.

Consider an ecommerce retailer. Their visibility priority is product-level. Product schema, structured data for pricing and availability, presence in Google Shopping, merchant centre feeds, and increasingly, whether AI Overviews are surfacing their products in response to commercial queries. A search visibility audit for this business spends significant time on schema compliance, product page technical performance, and AI Overview presence for transactional terms. It also examines whether reviews are appearing in AI-generated responses and whether third-party retail platforms are outranking the brand’s own product pages in both traditional and AI search.

A professional services firm operates on entirely different logic. The buyer journey is longer. Trust is established through credibility signals, not product feeds. Working with EY on content strategy made this pattern clear: the audit for a firm of that kind is fundamentally an authority audit. It measures whether the brand’s thought leadership content is structured to be retrievable by AI systems, whether named experts have sufficient entity signals for language models to understand who they are and why they are credible, and whether the firm appears in AI-generated answers to the high-value questions its target audience is asking.

A healthcare brand, or any business operating in a YMYL category, faces a different challenge again. The stakes of misinformation are higher, and AI systems treat this accordingly. An audit for a healthcare brand needs to assess E-E-A-T signals with precision, examine how content is attributed to named qualified professionals, evaluate structured data for health-specific schema types, and determine whether the brand’s content is appearing in or excluded from AI Overviews for symptom, condition, and treatment queries.

A local business has a different set of priorities. NAP consistency across directories, Google Business Profile completeness and accuracy, localised service page structure, and presence in AI answers to local intent queries. “Best [service] in [city]” queries are now frequently answered by AI Overviews that pull from a combination of GBP data, local content, and third-party sources. A local business that scores well on traditional local SEO but has not structured its content for AI retrieval is losing ground that was once securely its own.
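
As a rough illustration of the NAP consistency check described above, the sketch below normalises name, address, and phone across listings and reports which fields differ from a reference record. The business details, source names, and the UK phone handling are hypothetical assumptions made for the example, not part of any particular audit tool.

```python
import re


def normalise_nap(name: str, address: str, phone: str) -> tuple[str, str, str]:
    """Reduce a listing to a comparable form."""
    def clean(text: str) -> str:
        text = re.sub(r"[^\w\s]", " ", text.lower())  # drop punctuation
        return re.sub(r"\s+", " ", text).strip()      # collapse whitespace

    digits = re.sub(r"\D", "", phone)
    # Assumption for this sketch: UK numbers, so +44 and a leading 0 are equivalent.
    if digits.startswith("44"):
        digits = "0" + digits[2:]
    return clean(name), clean(address), digits


def find_nap_mismatches(reference: dict, listings: dict) -> dict:
    """Map each listing source to the fields that differ from the reference."""
    fields = ("name", "address", "phone")
    ref = normalise_nap(*(reference[f] for f in fields))
    mismatches = {}
    for source, listing in listings.items():
        got = normalise_nap(*(listing[f] for f in fields))
        diff = [f for f, a, b in zip(fields, ref, got) if a != b]
        if diff:
            mismatches[source] = diff
    return mismatches


# Hypothetical sample data.
REFERENCE = {"name": "Example Plumbing Ltd",
             "address": "12 High Street, Leeds, LS1 1AA",
             "phone": "0113 496 0000"}
LISTINGS = {
    "directory_a": {"name": "Example Plumbing Ltd.",
                    "address": "12 High Street Leeds LS1 1AA",
                    "phone": "+44 113 496 0000"},
    "directory_b": {"name": "Example Plumbing Ltd",
                    "address": "12 High St, Leeds",
                    "phone": "0113 496 0001"},
}

if __name__ == "__main__":
    print(find_nap_mismatches(REFERENCE, LISTINGS))
```

Exact matching after normalisation is deliberately strict: an abbreviation such as "St" for "Street" is reported as a mismatch so that a person can decide whether it matters.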

The shape of a search visibility audit is determined by the shape of the buyer journey. The tool is the same. The output is not.

There is a useful parallel in how musicians talk about their craft. A track does not reach an audience through argument. It reaches them through repetition, association, and the emotional context in which they first heard it. By the time someone calls a song their favourite, the process that got them there is invisible even to them. The best audit work operates on the same principle. It does not produce a document that explains your brand to the algorithm; it maps the full set of conditions under which a buyer will encounter, recognise, and trust you before they have consciously decided to look. That process starts long before the search. The audit should account for all of it.

The Three Layers a 2026 Audit Must Cover

A comprehensive search visibility audit in 2026 must cover three distinct layers: traditional search health, AI search presence, and discovery platform signals. Most audits cover the first in detail, gesture toward the second, and ignore the third entirely. That gap is where brands are losing ground right now.

The first layer is traditional search health. Technical infrastructure, crawlability, indexation, on-page signals, Core Web Vitals, internal linking architecture, backlink quality. These are the foundations. They remain important. They are no longer sufficient.

Within this layer, the technical standards have tightened. According to the HTTP Archive Web Almanac, 43 percent of sites still fail Core Web Vitals. A Largest Contentful Paint above 2.5 seconds, a Cumulative Layout Shift above 0.1, or an Interaction to Next Paint above 200 milliseconds will cost a site in rankings and in conversion. A useful audit quantifies these against current thresholds and connects the gap to business impact, not just a technical score. Screaming Frog remains the audit standard for crawl-level technical analysis, run alongside Google Search Console for performance data, PageSpeed Insights for Core Web Vitals measurement, and GA4 for behavioural context.
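
One way to connect those thresholds to business impact is sketched below: flag page templates that exceed any passing threshold, then order them by entry sessions. The field names (`template`, `monthly_entry_sessions`, `metrics`) and the sample figures are hypothetical; in practice the measurements would come from PageSpeed Insights or field data, and the sessions from GA4.

```python
# Passing thresholds as quoted in the text above.
THRESHOLDS = {
    "lcp_seconds": 2.5,  # Largest Contentful Paint
    "cls": 0.1,          # Cumulative Layout Shift
    "inp_ms": 200,       # Interaction to Next Paint
}


def failing_metrics(measurements: dict) -> list:
    """Return the metrics whose measured value is above the passing threshold."""
    return [m for m, limit in THRESHOLDS.items() if measurements[m] > limit]


def rank_failing_templates(templates: list) -> list:
    """List failing templates, largest entry traffic first."""
    failing = []
    for t in templates:
        failed = failing_metrics(t["metrics"])
        if failed:
            failing.append({
                "template": t["template"],
                "failed": failed,
                "sessions": t["monthly_entry_sessions"],
            })
    return sorted(failing, key=lambda row: row["sessions"], reverse=True)


# Hypothetical sample data.
SAMPLE_TEMPLATES = [
    {"template": "product", "monthly_entry_sessions": 42000,
     "metrics": {"lcp_seconds": 3.1, "cls": 0.05, "inp_ms": 180}},
    {"template": "blog", "monthly_entry_sessions": 9000,
     "metrics": {"lcp_seconds": 2.1, "cls": 0.02, "inp_ms": 150}},
    {"template": "category", "monthly_entry_sessions": 58000,
     "metrics": {"lcp_seconds": 2.4, "cls": 0.18, "inp_ms": 240}},
]

if __name__ == "__main__":
    for row in rank_failing_templates(SAMPLE_TEMPLATES):
        print(row["template"], row["failed"], row["sessions"])
```

Sorting by sessions is only a stand-in for commercial impact; revenue or lead value per template would be a better key where that data exists.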

Structured data compliance is the other technical area where most audits are underdelivering. Schema markup is the mechanism by which content signals its meaning to both search engines and AI systems. An audit needs to verify not just that schema exists, but that it is accurate, complete, and deployed correctly across the full range of relevant types for that business. The Google Rich Results Test surfaces errors. The audit should also check whether those schema types are actually improving search result appearance and whether they are feeding AI retrieval accurately.
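
The difference between schema that exists and schema that is complete can be sketched with a small completeness check. The example below uses only the Python standard library to pull JSON-LD blocks out of a page and compare each node against a checklist. The `EXPECTED_PROPERTIES` checklist and the sample page are hypothetical; a real audit would take required and recommended properties from the search engine's own structured data documentation.

```python
import json
from html.parser import HTMLParser

# Hypothetical checklist for this sketch only.
EXPECTED_PROPERTIES = {
    "Product": {"name", "image", "offers"},
    "Offer": {"price", "priceCurrency", "availability"},
}


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def check_node(node) -> list:
    """Recursively report checklist properties missing from typed nodes."""
    issues = []
    if isinstance(node, list):
        for item in node:
            issues.extend(check_node(item))
    elif isinstance(node, dict):
        node_type = node.get("@type")
        if isinstance(node_type, str):
            expected = EXPECTED_PROPERTIES.get(node_type, set())
            for prop in sorted(expected - set(node)):
                issues.append(f"{node_type}: missing {prop}")
        for value in node.values():
            issues.extend(check_node(value))
    return issues


def audit_jsonld(html: str) -> list:
    """Return a list of human-readable issues found in a page's JSON-LD."""
    parser = JsonLdExtractor()
    parser.feed(html)
    issues = []
    for raw in parser.blocks:
        try:
            issues.extend(check_node(json.loads(raw)))
        except json.JSONDecodeError as exc:
            issues.append(f"invalid JSON-LD: {exc.msg}")
    return issues


# Hypothetical sample page.
SAMPLE_HTML = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Example Mattress",
 "offers": {"@type": "Offer", "price": "499.00", "priceCurrency": "GBP"}}
</script>
</head></html>
"""

if __name__ == "__main__":
    for issue in audit_jsonld(SAMPLE_HTML):
        print(issue)
```

A check like this only covers completeness. Whether the values are accurate (for example, that the marked-up price matches the visible price) still needs a comparison against the rendered page.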

The second layer is AI search presence. This is where most businesses have the largest and least-measured gap. The audit must assess whether the brand appears in AI Overviews for high-value queries, whether its content is structured in a way that language models can retrieve and cite, and whether the entity signals associated with the brand are strong enough for AI systems to understand what it is, who it serves, and why it is credible.
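
To make this layer measurable rather than anecdotal, manual checks can be logged and summarised. The sketch below assumes a hand-recorded log of queries, the platform tested, and the brands named in each answer; the brand names, queries, and record structure are all hypothetical.

```python
from collections import defaultdict


def presence_by_platform(observations: list, brand: str) -> dict:
    """Share of logged answers per platform that mention the brand."""
    seen = defaultdict(int)
    hits = defaultdict(int)
    target = brand.lower()
    for obs in observations:
        seen[obs["platform"]] += 1
        if target in [name.lower() for name in obs["brands_mentioned"]]:
            hits[obs["platform"]] += 1
    return {platform: hits[platform] / seen[platform] for platform in seen}


def competitor_only_answers(observations: list, brand: str) -> list:
    """Answers that name at least one other brand but not the audited brand."""
    target = brand.lower()
    gaps = []
    for obs in observations:
        mentioned = [name.lower() for name in obs["brands_mentioned"]]
        if mentioned and target not in mentioned:
            gaps.append((obs["query"], obs["platform"]))
    return gaps


# Hypothetical manual log.
OBSERVATIONS = [
    {"query": "best mattress for back pain", "platform": "AI Overviews",
     "brands_mentioned": ["Competitor A", "Example Brand"]},
    {"query": "best mattress for back pain", "platform": "ChatGPT",
     "brands_mentioned": ["Competitor A"]},
    {"query": "best mattress for back pain", "platform": "Perplexity",
     "brands_mentioned": ["Competitor B"]},
    {"query": "mattress delivery times uk", "platform": "AI Overviews",
     "brands_mentioned": ["Competitor B"]},
    {"query": "mattress delivery times uk", "platform": "ChatGPT",
     "brands_mentioned": ["Example Brand", "Competitor B"]},
    {"query": "mattress delivery times uk", "platform": "Perplexity",
     "brands_mentioned": []},
]

if __name__ == "__main__":
    print(presence_by_platform(OBSERVATIONS, "Example Brand"))
    print(competitor_only_answers(OBSERVATIONS, "Example Brand"))
```

Because AI answers vary between runs, a log like this is a sample, not a ranking. Repeating the same query set on a fixed schedule makes the trend more meaningful than any single reading.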

Answer Engine Optimisation (AEO) and Generative Engine Optimisation (GEO) are the disciplines that address this layer. AEO is concerned with structuring content to answer specific questions directly, the kind of passage-level, self-contained answers that AI systems extract and surface. GEO is concerned with the broader strategies that increase the probability of a brand being cited or referenced in AI-generated content. Both require a different content architecture than traditional SEO. The audit must evaluate both as measurable gaps with specific remediation paths.

The third layer is discovery platform signals. Reddit, Quora, YouTube, LinkedIn, industry forums, sector communities. These platforms now feed AI systems directly. A brand that is absent from the conversations where its buyers are active is absent from the AI outputs those conversations inform. That absence does not show up in Google Search Console. It does not surface in a standard audit. But it is real, and it compounds over time.

Research by Semrush found that Reddit in particular has seen a significant increase in Google visibility over the past two years, a direct result of Google’s decision to surface community content more prominently in results, including as sources for AI Overviews. A brand not present in Reddit threads on its category is not just missing a social channel. It may be absent from the source material that informs how AI systems understand the category and who operates within it.

If you want to understand exactly where your brand sits across all three layers of modern search, the free AI Visibility Snapshot at stickyfrog.co.uk is the starting point.

What a Roadmap Actually Looks Like

A well-structured search visibility audit closes with a prioritised action plan mapped to business outcomes, not a list of technical recommendations sorted by severity. That plan should tell the marketing team what to fix first, why it matters in terms they recognise, and what the realistic timeline looks like given the resources the business actually has.

The audit should identify wins across three time horizons. Short-term wins are the technical fixes that unblock existing visibility: resolving crawl errors, fixing broken canonical chains, correcting schema validation failures, improving page speed on high-traffic templates. Medium-term priorities are content and structural changes: building out FAQ and passage-level content optimised for AI retrieval, improving internal linking architecture, developing community platform presence. Longer-term strategic work includes entity building, authority content development, and structured AI visibility monitoring across ChatGPT, Perplexity, and Google AI Overviews.

The prioritisation model matters. A finding that says “fix duplicate meta descriptions across 312 pages” is accurate. It is not actionable unless it is connected to which of those 312 pages drive the most organic entry traffic, which are closest to ranking for high-intent terms, and which are appearing in AI Overviews without optimal descriptions. That context turns a list into a priority.
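
A minimal sketch of that prioritisation, assuming the three context signals named above are available per page. The weights, the position band treated as "close to ranking", and the sample pages are hypothetical choices for illustration, not a recommended model.

```python
# Hypothetical weights; they should be agreed with the business, not taken as given.
WEIGHTS = {"traffic": 0.5, "near_ranking": 0.3, "ai_overview": 0.2}


def priority_score(page: dict, max_sessions: int) -> float:
    """Blend entry traffic, ranking proximity, and AI Overview presence into one score."""
    traffic = page["entry_sessions"] / max_sessions if max_sessions else 0.0
    # Assumption: positions 4 to 20 count as "close to ranking" for a high-intent term.
    near_ranking = 1.0 if 4 <= page["best_high_intent_position"] <= 20 else 0.0
    ai_overview = 1.0 if page["cited_in_ai_overview"] else 0.0
    score = (WEIGHTS["traffic"] * traffic
             + WEIGHTS["near_ranking"] * near_ranking
             + WEIGHTS["ai_overview"] * ai_overview)
    return round(score, 3)


def prioritise(pages: list) -> list:
    """Return (url, score) pairs, highest priority first."""
    max_sessions = max((p["entry_sessions"] for p in pages), default=0)
    scored = [(p["url"], priority_score(p, max_sessions)) for p in pages]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical pages sharing a duplicate meta description.
PAGES = [
    {"url": "/delivery/", "entry_sessions": 3000,
     "best_high_intent_position": 2, "cited_in_ai_overview": False},
    {"url": "/mattresses/", "entry_sessions": 12000,
     "best_high_intent_position": 6, "cited_in_ai_overview": True},
    {"url": "/blog/sleep-tips/", "entry_sessions": 6000,
     "best_high_intent_position": 14, "cited_in_ai_overview": True},
]

if __name__ == "__main__":
    for url, score in prioritise(PAGES):
        print(url, score)
```

The point of the score is the ordering, not the number: it turns 312 equal findings into a short list of pages to fix first.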

The Search Visibility Strategy Audit at Sticky Frog is structured around this framework. The output is a prioritised roadmap with business-context framing. Not a technical inventory delivered without direction.

The Conversation Most Audits Never Have

The most important question a search visibility audit can answer is not what is broken. It is what is stopping growth, and what does fixing it actually require. That question demands a conversation about the business, its audience, its competitive position, its content gaps, and its internal capacity to act on recommendations. Most audits skip that conversation entirely.

An audit produced without understanding the commercial context of the business it is assessing will produce findings that are technically correct and strategically inert. It will identify the same issues any crawl tool would surface. It will not explain why organic visibility has plateaued while the category is growing. It will not tell you why a competitor with a technically weaker site is consistently present in AI search results and you are not.

Those questions require judgment, not just data. The thinking behind how that judgment gets applied is set out in detail in the essay that sits at the foundation of this work, and it starts from the same premise: the human at the end of the query has not changed.

The Human Algorithm is built on the premise that the most durable search strategies start from the human on the other end of the query. AI search makes that more true, not less. The buyer has not changed. The path they take to find you has.

How to Commission a Search Visibility Audit That Produces Decisions

The obvious counterargument here is worth acknowledging directly. An audit that covers AI search presence and discovery platform signals sounds thorough in theory, but any marketing team still needs to justify budget against measurable outcomes. If AI visibility does not produce sessions, and sessions do not produce revenue, why invest in measuring something that does not appear in a report?

The answer is that the report is the problem, not the visibility. Branded search volume, direct session growth, and revenue stability are all measurable now, in GA4 and Search Console, without new tools. What changes is the interpretation. A brand with declining organic sessions and growing branded search is not failing at search. It is succeeding at influence through a channel the old reporting framework was not built to see. The audit’s job is to make that visible, and to give you the language to explain it internally to leadership.
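
That change in interpretation can be sketched as a simple comparison of two trends. The example below assumes period-over-period organic sessions from GA4 and branded search clicks from Search Console; the labels and sample figures are hypothetical, and the classification is a reading aid, not a diagnosis.

```python
def pct_change(previous: float, current: float) -> float:
    """Percentage change from previous to current."""
    if previous == 0:
        raise ValueError("previous period must be non-zero")
    return (current - previous) / previous * 100


def interpret_trends(organic_prev: float, organic_now: float,
                     branded_prev: float, branded_now: float) -> str:
    """Label the combined movement of organic sessions and branded search."""
    organic = pct_change(organic_prev, organic_now)
    branded = pct_change(branded_prev, branded_now)
    if organic < 0 and branded > 0:
        # The pattern described above: fewer clicks, more people searching by name.
        return "influence growing despite fewer organic sessions"
    if organic < 0 and branded <= 0:
        return "visibility and demand both declining"
    if organic >= 0 and branded > 0:
        return "sessions and brand demand both holding or growing"
    return "sessions holding while brand demand is flat or falling"


if __name__ == "__main__":
    # Hypothetical figures: organic sessions down 15 percent, branded clicks up 20 percent.
    print(interpret_trends(40000, 34000, 5000, 6000))
```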

To commission a search visibility audit that results in action rather than a shelved document, the brief needs to specify business outcomes, not just technical scope. Define what growth looks like (qualified traffic, AI search visibility, conversion from organic) and ask the provider to structure their findings and recommendations around those outcomes explicitly.

Before you commission anything, ask one question: what will this audit tell me that I cannot already see in my analytics and Search Console data? If the answer is “a more complete picture of what is broken,” that is a diagnostic. Useful, but limited. If the answer is “a prioritised roadmap for where to invest to achieve specific outcomes,” that is worth the investment.

The scoping conversation should cover all three layers. Ask whether the audit will assess AI Overview presence for your primary commercial queries. Ask whether it will evaluate your content’s structure for AI retrieval. Ask whether it will examine your brand’s presence on the discovery platforms that feed AI systems.

The recommended audit cadence for most businesses is a full search visibility audit twice a year, with monthly monitoring of Core Web Vitals, page speed, crawl health, and AI Overview presence for key terms. An annual audit is no longer adequate. The monthly checks are lightweight, but they catch the drift before it becomes a problem.

The way search is measured is changing faster than the formats used to measure it. The brands that close that gap fastest will not necessarily be the ones with the largest budgets. They will be the ones that asked the right questions before the work began.

Before the algorithm changed, there was always a business trying to reach a human. There still is. The audit should be built around that.

Frequently Asked Questions

What should a good SEO audit include in 2026?

A good search visibility audit in 2026 covers three layers: traditional search health (technical infrastructure, Core Web Vitals, schema compliance, backlinks), AI search presence (AI Overview visibility, AEO and GEO content structure, entity signals), and discovery platform signals (presence in Reddit, Quora, LinkedIn, and community platforms that feed AI systems). Audits covering only the first layer are producing an incomplete picture of where visibility is being won or lost.

What is the difference between an SEO audit and a search visibility audit?

A traditional SEO audit focuses on technical and on-page factors within Google search. A search visibility audit assesses presence across all channels where buyers discover and evaluate a brand, including traditional search, AI-generated results, and the community platforms that inform both. The latter is the more accurate and useful scope for most businesses in 2026.

What tools should be used in a 2026 SEO audit?

A comprehensive audit uses Screaming Frog for in-depth crawl analysis of server performance, crawlability, and schema markup; Google Search Console for performance and indexation data; PageSpeed Insights for Core Web Vitals measurement; and GA4 for behavioural and conversion context. AI search presence should be tested manually across ChatGPT, Perplexity, and Google AI Overviews. No single tool covers all three layers.

What are Core Web Vitals and why do they matter for an audit?

Core Web Vitals are Google’s standardised metrics for user experience: Largest Contentful Paint (load speed, passing threshold 2.5 seconds), Cumulative Layout Shift (visual stability, passing threshold 0.1), and Interaction to Next Paint (responsiveness, passing threshold 200 milliseconds). According to the HTTP Archive Web Almanac, 43 percent of sites still fail these thresholds. The audit should measure all three and connect any failures to pages with the highest commercial impact.

Why do so many SEO audits never get fully implemented?

Most SEO audits are written for an SEO specialist, not for the marketing leader commissioning them. When findings are not connected to business outcomes and prioritised in commercial terms, the marketing team cannot translate them into confident decisions. A search visibility audit structured around business outcomes and a prioritised roadmap produces implementation because every recommendation connects to something the business recognises.

How has AI search changed what an audit needs to cover?

AI Overviews, ChatGPT, Perplexity, and Gemini now intercept a significant proportion of search journeys before a user reaches a traditional result. An audit that does not assess whether content is structured for AI retrieval, whether entity signals are clear enough for language models to understand and cite the brand, and whether the brand appears in AI-generated answers for its key queries, is missing a substantial and growing part of the visibility picture.

What are discovery platform signals and why do they matter?

Discovery platform signals refer to a brand’s presence across Reddit, Quora, LinkedIn, YouTube, and sector-specific communities. These platforms are used as source material by AI systems when generating answers. A brand absent from the conversations where its buyers are active may also be absent from AI-generated answers informed by those conversations. Semrush data shows Reddit’s visibility in Google search has grown significantly, including as a source for AI Overviews.

How does an audit differ between an ecommerce brand and a professional services firm?

An ecommerce audit prioritises product schema, pricing and availability structured data, merchant centre feed health, and AI Overview presence for transactional queries. A professional services audit focuses on entity authority, thought leadership content structured for AI retrieval, named expert signals, and appearance in AI answers to high-value advisory questions. The tools and framework are similar. The priorities and shape of the roadmap are different.

How often should a search visibility audit be conducted?

A full search visibility audit should be conducted twice a year. Monthly monitoring should cover Core Web Vitals, page speed, crawl health, and AI Overview presence for primary commercial terms. Waiting twelve months between audits is no longer adequate given the pace of change in AI search behaviour and algorithm updates.

How do I commission an audit that produces decisions rather than a document?

Define business outcomes before scoping the work: qualified traffic, AI search visibility, conversion from organic. Ask the provider to structure findings around those outcomes with prioritised actions and realistic timelines. Ask specifically whether the scope covers all three layers: traditional search health, AI search presence, and discovery platform signals. A brief that specifies only technical scope will produce a technical output. A brief that specifies business outcomes will produce a roadmap.

Founder and author at Sticky Frog and creator of The Human Algorithm. With 15 years of SEO experience spanning early-stage startups, scale-ups, and enterprise brands including Toyota Europe, Bupa, EY, Citibank, Deliveroo, and American Express, he specialises in AI search visibility, entity SEO, and search strategy for the era where clicks are declining but influence is not. Get found for what you do best.